It was merged after they were rightfully ridiculed by the community.

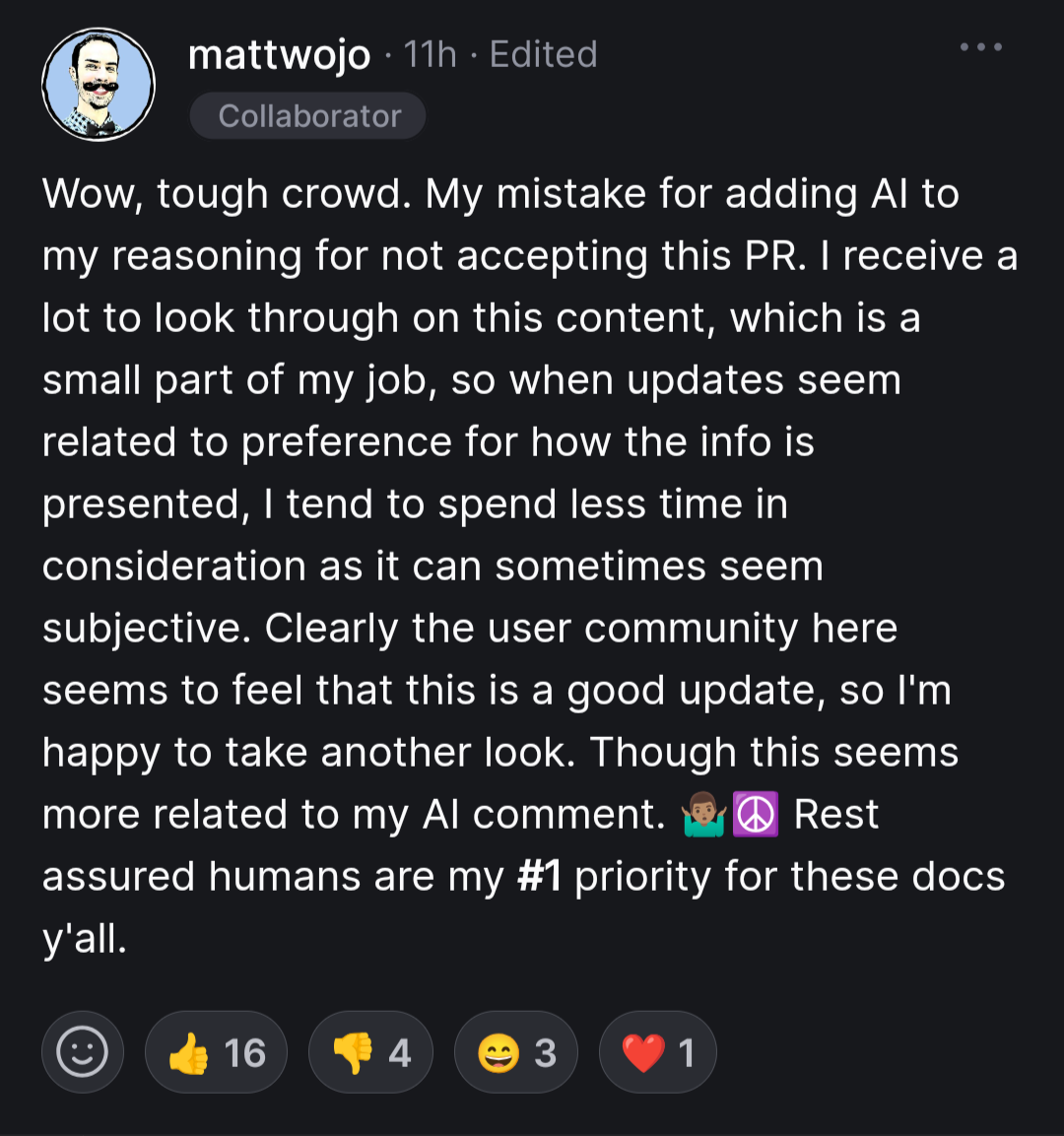

The awful response to the backlash by matwojo really takes the cake:

I’ve learned today that you are sensitive to ensuring human readability over any concerns in regard to AI consumption

LLMs will replace software engineers any day now!

Also LLMs:

Fuck AI code generation. I gave it a fair shot and it’s a waste of time that actively makes my job harder.

My employer hired a consultancy to implement some Terraform and Ansible code for some cloud infra. In return we got hundreds of lines of machine-generated code that do not work, and now we are suing the consultant.

Worst part is that the guy who reviewed this ended up writing everything himself.

The more apt headline is that Microsoft doesn’t pay enough people to review their documentation PRs. The org has 572 members but nearly every PR to this repo is processed by this one guy.

They excused it as “I’m overworked” which… fair enough, but that doesn’t excuse this. I blame Microsoft, not this fella.

Even the dude’s responses in those comments seem written by an AI.

He’s right that it’s probably harder for AI to understand. But wrong in every other way possible. Human understanding should trump AI, at least while they’re as unreliable as they currently are.

Maybe one day AI will know how to not bullshit, and everyone will use it, and then we’ll start writing documentation specifically for AI. But that’s a long way off.

If it can’t understand human text then is it really worth using? Like isn’t that the minimum standard here, to get context and understanding from text?

I don’t have any skin in this game, but this seems backwards and stupid… Especially since all current AI is basically fancy pattern matching.

It can understand, just not as well.

Having AI not bullshitting will require an entirely different set of algorithms than LLMs, or ML in general. ML by design approximates answers, and you don’t use it for anything that’s deterministic and has a correct answer. So, in that regard, we’re basically at square 0.

You can keep on slapping a bunch of checks on top of the random text prediction it gives you, but if you have a way of checking whether something is really true for every case imaginable, then you can probably just use that to generate the reply instead, and it can’t be something that’s also ML/random.

You can’t confidently say that because nobody knows how to solve the bullshitting issue. It might end up being very similar to current LLMs.